On CLIs vs. MCP

A meme which was posted during the CLI vs. MCP debate.

MCP, originally introduced by Anthropic in late 2024, is an open standard/protocol that allows AI models to connect to external data sources, tools, and services in a structured, standardized way (think of it as a "USB-C for AI"). It uses servers that expose schemas, tools, resources, and prompts, enabling deterministic calls, type safety, discoverability, and better integration across different AI clients (e.g., Claude, ChatGPT, IDEs). It's designed for reliability, security (e.g., scoped permissions), and avoiding custom glue code for every model-tool pair.
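To make "servers that expose schemas" concrete, here is a minimal sketch of what a tool definition and a tool call look like on the wire. MCP messages are JSON-RPC 2.0, and tools are described by a `name`, a `description`, and a JSON Schema under `inputSchema`. The `search_logs` tool, its arguments, and the `build_tool_call` helper are hypothetical and exist only for illustration; a real client would use an MCP SDK rather than build messages by hand.

```python
import json

# Hypothetical tool definition, shaped like one entry in an MCP `tools/list` result.
# The tool name and its properties are made up for this example.
search_logs_tool = {
    "name": "search_logs",
    "description": "Return log lines that contain a given substring.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "path": {"type": "string"},
            "pattern": {"type": "string"},
        },
        "required": ["path", "pattern"],
    },
}


def build_tool_call(request_id, tool, arguments):
    """Build a JSON-RPC 2.0 `tools/call` request after a minimal required-key check."""
    required = tool["inputSchema"].get("required", [])
    missing = [key for key in required if key not in arguments]
    if missing:
        raise ValueError(f"missing required arguments: {missing}")
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool["name"], "arguments": arguments},
    }


request = build_tool_call(
    1, search_logs_tool, {"path": "server.log", "pattern": "ERROR"}
)
print(json.dumps(request, indent=2))
```

The schema is what gives the client discoverability and a basic form of type checking, and it is also the text that ends up in the model's context.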

CLIs, in contrast, involve giving the AI direct access to run existing terminal commands (git, npm, docker, AWS CLI, but also Unix commands such as ls, cat, etc.) in the shell/environment where the agent operates. This leverages the fact that modern models already "understand" shell commands well.
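As a rough sketch of the CLI approach, an agent harness only needs a function that runs a command and hands the exit code and output back to the model. The `run_command` helper below is a hypothetical name, not part of any particular agent framework, and it assumes the command is passed as an argument list rather than a shell string.

```python
import subprocess
import sys


def run_command(argv, timeout=10):
    """Run a command without a shell and return its exit code, stdout, and stderr."""
    completed = subprocess.run(
        argv, capture_output=True, text=True, timeout=timeout
    )
    return {
        "exit_code": completed.returncode,
        "stdout": completed.stdout,
        "stderr": completed.stderr,
    }


# An agent would typically run something like ["git", "status"].
# The Python interpreter is used here only so the sketch runs on any machine.
result = run_command([sys.executable, "-c", "print('hello from the shell')"])
print(result)
```

There is no schema to load: the model is expected to already know which commands exist and how to call them.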

Key Points of the Debate

The discussion has heated up in March 2026, with threads, benchmarks, and announcements highlighting practical trade-offs:

- Token/Context Efficiency - MCP servers often load full tool schemas into the model's context on every session or turn, which can consume significant tokens (some reports cite 70-90k+ tokens per task, or up to 72% of the context window before user input even starts). CLIs avoid this overhead: there is no schema bloat, execution is direct, latency is lower, and in many cases they are cheaper and faster.

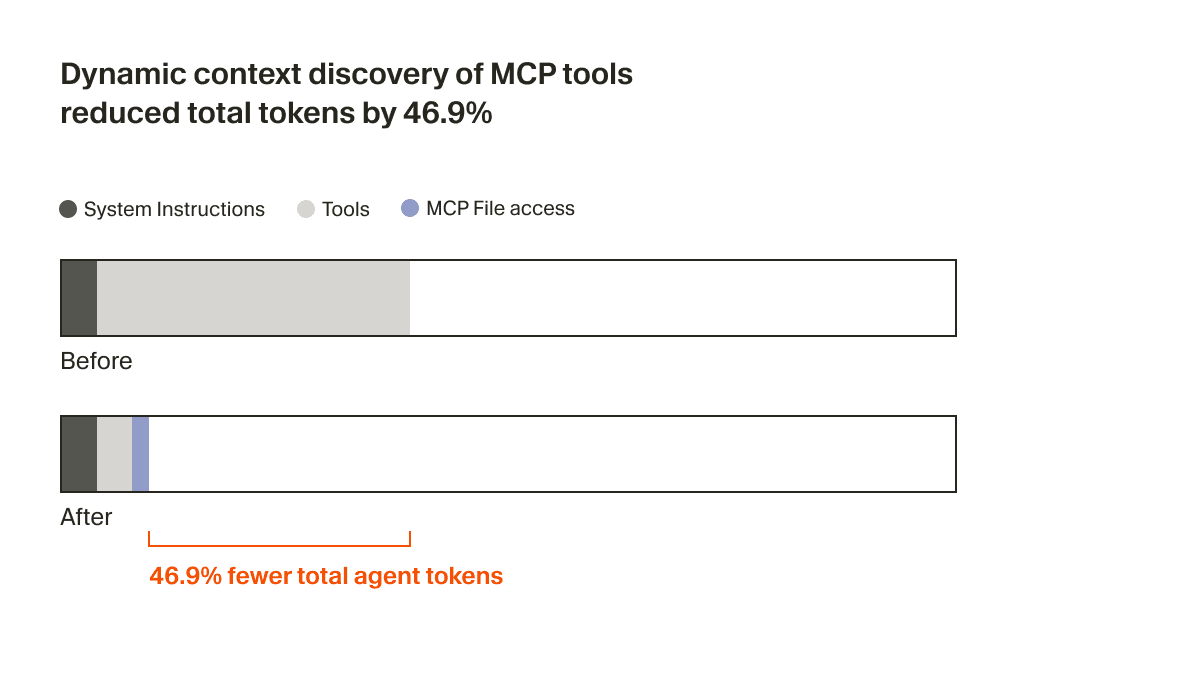

However, companies like Cursor have come up with some clever tricks to keep MCP servers token-efficient. The idea is to store all tool descriptions on the filesystem and tell the coding agent only the short tool names. The agent can then look up a tool's full description when the task calls for it.

Cursor's dynamic context discovery approach.
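The idea of keeping tool descriptions on disk and exposing only their names can be sketched in a few lines. This is not Cursor's implementation; the tool definitions, file layout, and helper names below are assumptions chosen to illustrate the pattern.

```python
import json
import tempfile
from pathlib import Path

# Hypothetical tool descriptions; a real MCP server would supply these.
TOOLS = {
    "search_logs": {
        "description": "Return log lines that contain a given substring.",
        "inputSchema": {
            "type": "object",
            "properties": {"pattern": {"type": "string"}},
            "required": ["pattern"],
        },
    },
    "restart_service": {
        "description": "Restart a named service and report its new status.",
        "inputSchema": {
            "type": "object",
            "properties": {"service": {"type": "string"}},
            "required": ["service"],
        },
    },
}


def write_tool_files(tools, directory):
    """Write one JSON file per tool and return only the sorted tool names."""
    for name, spec in tools.items():
        (Path(directory) / f"{name}.json").write_text(json.dumps(spec, indent=2))
    return sorted(tools)


def load_tool(directory, name):
    """Read a single tool description from disk, only when the task needs it."""
    return json.loads((Path(directory) / f"{name}.json").read_text())


with tempfile.TemporaryDirectory() as tool_dir:
    names = write_tool_files(TOOLS, tool_dir)
    # Only this short line goes into the model's context up front.
    prompt_stub = "Available tools: " + ", ".join(names)
    # The full description is fetched lazily for the one tool that is used.
    spec = load_tool(tool_dir, "search_logs")

full_dump = json.dumps(TOOLS)
print(prompt_stub)
print(len(prompt_stub), "characters up front vs", len(full_dump), "for all schemas")
```

With two toy tools the saving is small, but the up-front cost grows with the number of tool names rather than with the size of every schema.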

Composable - CLIs enable coding agents to pass the outputs of various tool calls directly to one another via the pipe operator (`|`). For example, `cat server.log | grep ERROR | sort | uniq` enables a coding agent to read a server log, keep only error lines, sort them, and remove duplicates, all in one go.

Performance & Reliability in Practice - Many developers report that CLIs "just work" better for real workflows (e.g., editing files, running tests, git ops, deploying). MCP can introduce issues like misread schemas, tool selection errors, looping, higher token burn, and debugging friction (e.g., JSON-RPC errors). Some builders have shifted to CLI wrappers for production reliability, calling MCP more idealistic or enterprise-oriented.
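To show what the log pipeline from the composability point does at each stage, here is the same data flow mirrored in plain Python. The log lines are made up, and the `grep` and `sort_unique` helpers are illustrative stand-ins for the Unix tools, not wrappers around them.

```python
def grep(lines, needle):
    """Stand-in for `grep NEEDLE`: keep only lines containing the substring."""
    return (line for line in lines if needle in line)


def sort_unique(lines):
    """Stand-in for `sort | uniq`: sorted lines with duplicates removed."""
    return sorted(set(lines))


# Made-up contents of server.log, standing in for `cat server.log`.
log_lines = [
    "ERROR db timeout",
    "INFO server started",
    "ERROR db timeout",
    "ERROR auth failed",
]

# Each stage consumes the previous stage's output, like a shell pipe.
errors = sort_unique(grep(log_lines, "ERROR"))
print(errors)  # ['ERROR auth failed', 'ERROR db timeout']
```

In the shell the agent gets this chaining for free in a single command, whereas separate tool calls would need one round trip per stage.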

Interpretability & Debugging - CLIs and scripts are readable and debuggable by both humans and agents (the agent can inspect the runtime). MCP tool executions are often more opaque or hidden.

Security & Control - MCP wins here with structured permissions, sandboxing, limited actions, and auditing. Direct CLI access is riskier (potential for destructive commands), though wrappers can mitigate this.
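One way a wrapper can reduce the risk of direct CLI access is to check each command against an allowlist before it is run. The sketch below is a simplified illustration, not a sandbox: the allowed and blocked sets are hypothetical, and it assumes the returned argument list is executed without a shell, so characters like `|` or `;` are never interpreted.

```python
import shlex

# Hypothetical policy; a real deployment would tune these sets.
ALLOWED_COMMANDS = {"ls", "cat", "grep", "git"}
BLOCKED_GIT_SUBCOMMANDS = {"push", "reset", "clean"}


def check_command(command_line):
    """Return the parsed argv if the command is permitted, else raise PermissionError."""
    argv = shlex.split(command_line)
    if not argv:
        raise PermissionError("empty command")
    if argv[0] not in ALLOWED_COMMANDS:
        raise PermissionError(f"command not allowed: {argv[0]}")
    if argv[0] == "git" and len(argv) > 1 and argv[1] in BLOCKED_GIT_SUBCOMMANDS:
        raise PermissionError(f"git subcommand not allowed: {argv[1]}")
    return argv


print(check_command("git status"))

for risky in ("rm -rf /", "git push origin main"):
    try:
        check_command(risky)
    except PermissionError as error:
        print("blocked:", error)
```

This gives some of the "limited actions" property attributed to MCP, although auditing and scoped credentials would still need to be handled separately.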

When to Use Which - Not a strict either/or:

- Use CLI for local/dev workflows, tools the model already knows (git/npm/etc.), speed, and simplicity.

- Use MCP for standardized integrations, remote/enterprise tools, multi-client compatibility, type-safe chaining, or when strict boundaries are needed.

- Hybrids appear common: CLI for execution, MCP for discovery/interface in some setups.

Influential voices have fueled the "MCP is fading" narrative: Perplexity's CTO announced a shift away from MCP internally due to context waste and auth friction, various builders have shared "CLI just works" experiences, and benchmarks show CLI using more or fewer tokens than MCP depending on the implementation. Others defend MCP as foundational for mature ecosystems.

Overall, the consensus emerging on X is that the debate isn't binary: MCP isn't "dead," but CLI approaches have gained momentum for pragmatic, token-efficient agentic coding in 2026, especially as models improve at handling terminals directly. It's practicality vs. standardization, with many landing on "use the right tool for the job" or combined approaches. The conversation remains active, with fresh threads and benchmarks appearing daily.